We Tested Snyk's Own Demo Repo. Their Scanner Found Nothing.

This one started because of a client.

We'd been onboarding a team that runs a lot of Scala in production. Before we could talk about scanning their actual repos, the obvious question came up: does your tool even work on Scala?

Fair enough. We needed to prove it. So we went looking for open-source Scala projects to run through our scanner.

That's when we stumbled onto Scala Woof.

What is Scala Woof?

Scala Woof is a repository built by Snyk. Not by some random developer. By Snyk themselves. They created it as a deliberately vulnerable application to demo their runtime protection capabilities.

The project has one intentional vulnerability disclosed: a Zip Slip path traversal. The whole point was to plant a known vulnerability, point a scanner at it, and show prospects that it gets caught. Classic sales demo material.

The project has since been archived. The runtime-protection docs it linked to (snyk.io/docs/runtime-protection/) no longer exist. If there were supposed to be more vulnerabilities in there, nobody documented them.

So here we are. A controlled test. A known vulnerability. A repo that a security vendor built specifically to show off their product.

Perfect. Let's see who actually finds it.

Five Scanners, One Repo

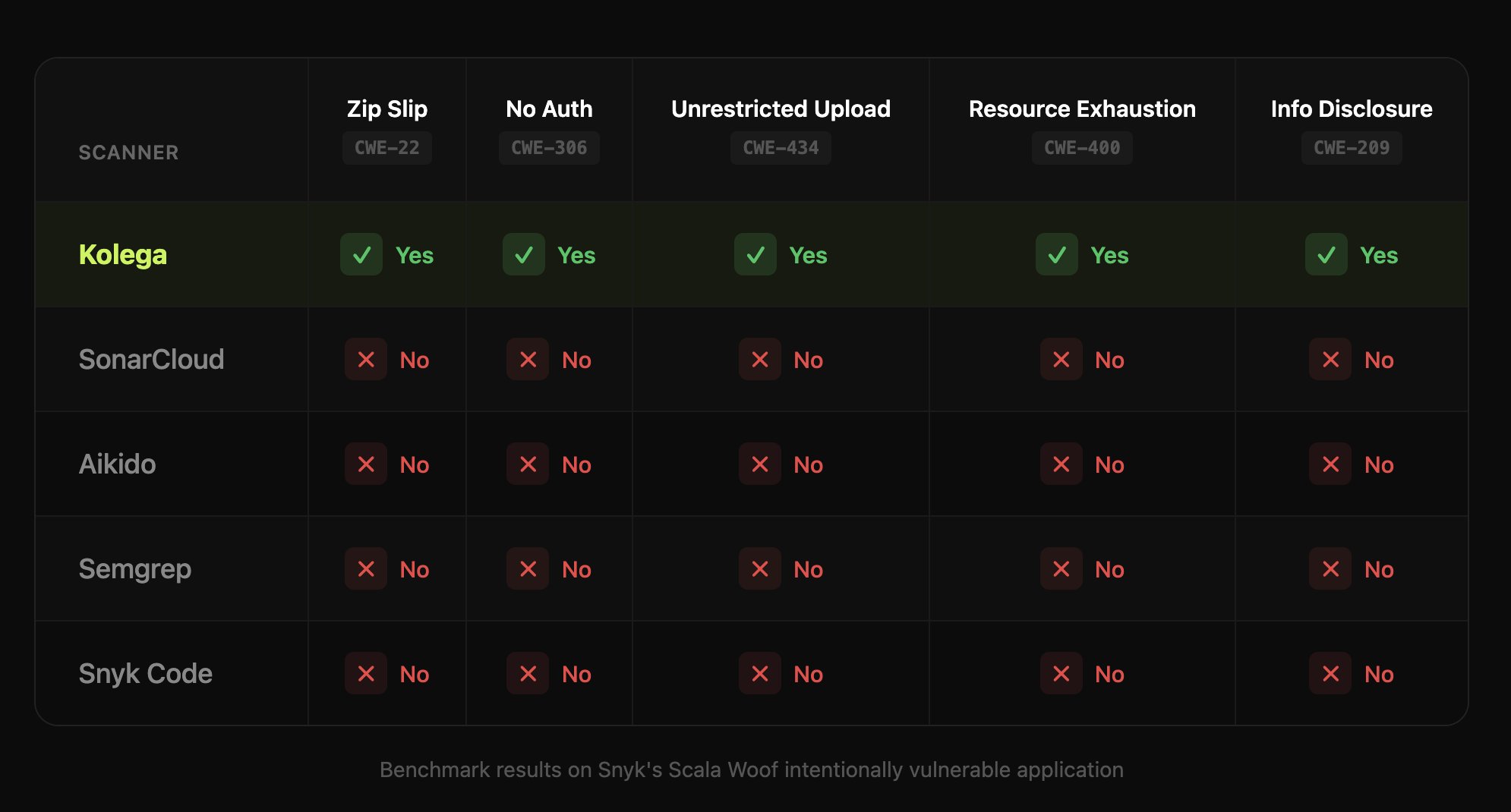

We pointed Kolega.dev, Snyk Code, SonarCloud, Aikido, and Semgrep at the exact same repository. Same code. Same commit. No tricks.

Benchmark Table

Every mainstream scanner found zero vulnerabilities.

Even Snyk's own tool missed the vulnerability in Snyk's own demo app.

I actually ran it twice because I thought I'd messed something up. Nope.

What we found

Our scanner picked up the Zip Slip finding. That was the easy part.

What surprised us was what else came out. The deep code scan flagged four more real issues, for five in total:

CWE-22: Zip Slip Path Traversal (CVSS 7.7, High). | The application calls |

CWE-306: Missing Authentication (CVSS 8.6, High). | The |

CWE-434: Unrestricted File Upload (CVSS 8.2, High). | The endpoint accepts any file without validation. No size limits, no content type checks, no format validation. Resources get consumed before any validation occurs. Location: |

CWE-400: Resource Exhaustion (CVSS 6.2, Medium). | The extraction logic has no limits on entry count, uncompressed size, or compression ratios. A 42KB zip bomb can expand to petabytes. Location: |

CWE-209: Information Disclosure (CVSS 5.3, Medium). | The J |
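To make the resource exhaustion finding (CWE-400) concrete, here is a minimal Python sketch of the kind of limits the extraction logic was missing. It is not Scala Woof's code and not a complete defence. The three limit values and the helper names check_archive_limits and make_zip are assumptions chosen purely for illustration.

```python
import io
import zipfile

# Hypothetical limits, chosen only for illustration.
MAX_ENTRIES = 1_000
MAX_TOTAL_BYTES = 50 * 1024 * 1024  # 50 MiB of declared uncompressed data
MAX_RATIO = 100                     # uncompressed bytes per compressed byte


def check_archive_limits(data: bytes) -> None:
    """Raise ValueError if the archive's declared contents exceed the limits."""
    with zipfile.ZipFile(io.BytesIO(data)) as archive:
        entries = archive.infolist()
        if len(entries) > MAX_ENTRIES:
            raise ValueError("too many entries")
        total = sum(entry.file_size for entry in entries)
        if total > MAX_TOTAL_BYTES:
            raise ValueError("uncompressed size too large")
        compressed = sum(entry.compress_size for entry in entries)
        if compressed and total / compressed > MAX_RATIO:
            raise ValueError("suspicious compression ratio")


def make_zip(name: str, payload: bytes) -> bytes:
    """Build a one-entry deflated archive in memory (demo helper)."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", zipfile.ZIP_DEFLATED) as archive:
        archive.writestr(name, payload)
    return buffer.getvalue()
```

The point of the sketch is ordering: the checks run against archive metadata before a single byte is extracted, which is the opposite of consuming resources first and validating later.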

Our scanner also produced one false positive: CWE-377 (insecure temporary file, flagged for overly permissive file permissions). That one was wrong on our part because NIO.2 in Java 7+ implements restrictive permissions by default. We're being upfront about that because it matters.

Snyk Code

Zero findings.

Snyk's own code analysis ran against Snyk's own demo repository. The one built specifically to contain a known vulnerability. Found nothing.

I don't even know what to say about that. The company that built the vulnerable app, for the express purpose of demonstrating its own security product, shipped a code scanner that can't find the vulnerability in that demo.

SonarCloud

Zero security findings. Flagged some code smells, but nothing security-related. Missed everything.

Aikido

Zero findings on the security scan.

Semgrep

Zero findings. Semgrep ran clean. Not one flag.

Four scanners. Zero findings. One planted vulnerability sitting right there.

The vendor that built the demo repo missed it.

This Isn't an Isolated Finding

If this were a one-off result, you could dismiss it as an edge case. Maybe Scala support is weak across the board. Maybe the repo was too small to trigger heuristics.

But we've seen this pattern before. Repeatedly.

When we scanned 45 open-source repositories with traditional SAST tools and our semantic analysis, the results told the same story:

Traditional SAST findings across 45 projects: 1,183

False positive rate: 87%

Vulnerabilities found by semantic analysis: 225

Maintainer acceptance rate: 90.24%

NocoDB: The Same Pattern

We ran Semgrep against NocoDB and got 222 findings. 208 were false positives. And it completely missed the critical SQL injection in the Oracle client. A vulnerability that could lead to complete database compromise. We found it, submitted PR #12748, and NocoDB implemented the fix.

Phase: 9 Authorization Bypasses

Phase is a secrets management tool. The irony writes itself. We found 9 authorization bypass vulnerabilities. One of my favourites was a double-negative logic error: if not user_id is not None. Python's operator precedence makes that read as not (user_id is not None), which is simply user_id is None, so whenever a user ID is present the permission check never actually runs. The door is wide open and the code looks like it's locked.
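The precedence behind that double negative is easy to verify in isolation. The sketch below is not Phase's code. The two function names are hypothetical and exist only to compare the condition as written with what Python actually evaluates.

```python
def condition_as_written(user_id):
    # `not` binds more loosely than `is not`, so Python parses this as
    # not (user_id is not None).
    return not user_id is not None


def condition_spelled_out(user_id):
    # The same condition with the double negative removed.
    return user_id is None


# The two forms agree for every input, including falsy IDs such as 0 or "".
for value in (None, 0, "", 42, "abc"):
    assert condition_as_written(value) == condition_spelled_out(value)
```

Any branch guarded by the condition as written is therefore entered only when no user ID was supplied at all.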

All 9 were fixed in PRs #722 through #731 within a week of our report.

Agenta: The Sandbox That Wasn't

Agenta used RestrictedPython to sandbox user code. Sounds solid. Except someone added __import__ to the safe builtins list. That completely defeats the sandbox. Any user could import os, subprocess, whatever they wanted, and run arbitrary code on the server.

Fixed in release v0.77.1.
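Why a single builtin matters can be shown without RestrictedPython at all. The sketch below is a standard-library simplification, not Agenta's code and not RestrictedPython's API: run_sandboxed is a hypothetical helper that executes source with nothing but an explicit builtins allowlist.

```python
def run_sandboxed(source, allowed_builtins):
    """Execute source with only the given builtins visible (toy sandbox)."""
    env = {"__builtins__": dict(allowed_builtins)}
    exec(source, env)
    return env.get("result")


# Stand-in for user-submitted code: it tries to reach the os module.
UNTRUSTED = "import os\nresult = 'imported:' + os.name"

# With an empty allowlist the import statement has nothing to call and fails.
try:
    run_sandboxed(UNTRUSTED, {})
    blocked = False
except ImportError:
    blocked = True

# Allowlisting __import__ hands the full import machinery back to the code.
escaped = run_sandboxed(UNTRUSTED, {"__import__": __import__})
```

Under these assumptions blocked ends up True while escaped holds a string built from the real os module. The import statement is just a call to __import__, so allowing that one name allows every module on the server.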

None of these were caught by pattern-matching SAST tools. The code compiled. It ran. It looked correct. It just didn't do what it was supposed to.

Why This Keeps Happening

The Scala Woof result isn't a fluke. It's a symptom of how these tools are built.

Pattern-matching scanners look for code that matches known bad signatures. They're basically fancy grep. They see query + userInput and flag SQL injection. They see exec(variable) and flag command injection. That's useful for catching the obvious stuff.
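As a rough illustration of the idea, here is a deliberately naive signature scanner in Python. It is a toy, not how any of the named products is implemented. The two regexes and the scan function are assumptions invented for this sketch.

```python
import re

# Hypothetical signatures modelled on the two examples in the text.
SIGNATURES = {
    "sql-injection": re.compile(r"query\s*\+\s*\w+"),
    "command-injection": re.compile(r"\bexec\(\s*\w+\s*\)"),
}


def scan(source):
    """Return (line number, rule name) for every line matching a signature."""
    findings = []
    for number, line in enumerate(source.splitlines(), start=1):
        for rule, pattern in SIGNATURES.items():
            if pattern.search(line):
                findings.append((number, rule))
    return findings


OBVIOUS = "sql = query + userInput"

# Illustrative extraction code with a Zip Slip shape; no line matches a rule.
ZIP_SLIP = """entry = zip.getNextEntry()
target = new File(destDir, entry.getName())
copy(zip, new FileOutputStream(target))"""
```

Calling scan(OBVIOUS) reports the concatenation, while scan(ZIP_SLIP) comes back empty because no single line resembles a known bad signature.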

But a Zip Slip vulnerability doesn't look like a known bad pattern. To catch it, you need to understand that:

A zip entry's filename comes from untrusted input

That filename gets used to build a file path

Nobody checks for directory traversal sequences

The file write can escape the target directory

Each line on its own looks fine. The bug lives in the relationship between them. In what the code means, not what it looks like.
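The four steps above can be sketched in a few lines of Python. This is an illustration of the general Zip Slip shape and one common guard, not the Scala Woof source. The function names unsafe_target and safe_target are hypothetical.

```python
import os


def unsafe_target(dest_dir, entry_name):
    # Vulnerable shape: the untrusted entry name goes straight into the path.
    return os.path.join(dest_dir, entry_name)


def safe_target(dest_dir, entry_name):
    """Resolve the entry path and refuse anything outside dest_dir."""
    root = os.path.realpath(dest_dir)
    target = os.path.realpath(os.path.join(root, entry_name))
    # commonpath equals root only when target sits inside (or is) root.
    if os.path.commonpath([root, target]) != root:
        raise ValueError(f"entry escapes extraction directory: {entry_name}")
    return target
```

With an entry named ../../etc/passwd, unsafe_target returns a path that normalises to somewhere outside the extraction directory, while safe_target raises before anything is written. The guard only works because it looks at the resolved path as a whole, which is the relationship between lines that the steps describe.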

That's why Snyk, SonarCloud, Aikido, and Semgrep all missed it. Their scanners saw syntactically valid code that didn't match any flagged patterns. The code compiled fine. It ran fine. It just allowed attackers to write files anywhere on the filesystem.

Semantic analysis works differently. We trace data flow from the zip entry through filename extraction, path construction, and file write operations. We understand that the combination creates a traversal vulnerability even though no individual line looks dangerous.

The Uncomfortable Question

If Snyk's scanner can't find the vulnerability in Snyk's own demo repository, what is it finding in your production code?

This isn't about attacking one vendor. SonarCloud missed it too. Aikido missed it. Semgrep missed it. The entire category of pattern-based SAST tools shares the same fundamental limitation.

The research suggests the stakes are rising. Veracode tested more than 100 LLMs across 80 coding tasks and found that 45% of AI-generated code failed security tests. The Stanford study by Perry et al. (2022) found that developers with AI access created more security flaws and were more confident their code was secure.

Meanwhile, the tools that are supposed to catch these problems produced an 87% false positive rate across our 45-project scan and missed the critical vulnerabilities entirely.

We're not saying traditional SAST is useless. It catches the obvious stuff. Direct SQL concatenation, eval with user input, hardcoded secrets. That has value.

But the vulnerabilities that matter most (authorization bypasses, logic errors, unsafe deserialization, race conditions) don't have syntax signatures. They require understanding what code is supposed to do, not just what it looks like.

What We're Doing About It

Every finding in our 45-project research is public. Every assessment includes the exact code locations, CWE classifications, PR numbers, and maintainer responses. You can verify every claim at kolega.dev/security-wins.

The Scala Woof results are documented too.

We don't ask you to trust our marketing. Go look at the GitHub PRs.

225 vulnerabilities. 45 projects. 90.24% acceptance rate from the people who actually maintain that code.

And one demo repo that the vendor who built it couldn't scan.

This analysis is part of Kolega.dev's ongoing security research. All findings are documented with full technical details, disclosure timelines, and maintainer responses. View the complete results at kolega.dev/security-wins.