Your Security Scanner Was the Weapon: The TeamPCP Supply Chain Attack

Thousands of CI/CD pipelines ran regular Trivy scans on March 19, 2026.

Every scan came back clean.

Every scan was also quietly stealing cloud credentials.

Someone had turned Trivy, the most trusted open-source vulnerability scanner in cloud-native CI/CD, into a credential harvester. The security tool that was supposed to protect the pipelines became the weapon.

Checkmarx KICS was compromised four days later, on March 23. Then came LiteLLM, the LLM API gateway present in 36% of the cloud environments Wiz Research monitors. One stolen GitHub token led to compromises across five ecosystems, 47+ poisoned npm packages, and credential exfiltration from at least 1,000 enterprise environments.

This is what a supply chain attack looks like when it happens at scale. And there is one detail about how it was made possible that hasn't been reported clearly enough.

Before March 19: The Door That Was Never Completely Closed

You need to go back weeks before the main attack to understand TeamPCP.

An automated bot named hackerbot-claw found a misconfigured pull_request_target workflow in Trivy's GitHub Actions. In GitHub Actions, a workflow triggered by the pull_request_target event runs in the context of the base repository, with access to its secrets, even when the pull request comes from a fork. If the workflow isn't written carefully, for example if it checks out and runs the pull request's code, a stranger's pull request can execute code with access to the repository's secrets.

That's exactly what happened. The bot exploited the misconfiguration and stole a Personal Access Token.
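To make the risky pattern concrete, here is a minimal Python sketch of a heuristic check. It flags a workflow that combines the pull_request_target trigger with a reference to the pull request's head, which is one common form of this misconfiguration. Which form Trivy's workflow actually took is not something this article establishes, and the sample workflow below is hypothetical.

```python
import re

# Heuristic sketch: pull_request_target combined with a reference to the pull
# request's head is one common form of the risky pattern. It is not a full audit.
TRIGGER = re.compile(r"\bpull_request_target\b")
PR_HEAD = re.compile(r"github\.event\.pull_request\.head\.(?:sha|ref)|github\.head_ref")


def strip_comments(workflow_text: str) -> str:
    """Drop whole-line YAML comments so commented-out triggers are ignored."""
    lines = workflow_text.splitlines()
    return "\n".join(ln for ln in lines if not ln.lstrip().startswith("#"))


def is_risky_workflow(workflow_text: str) -> bool:
    """True if the workflow uses pull_request_target and references the PR head."""
    text = strip_comments(workflow_text)
    return bool(TRIGGER.search(text)) and bool(PR_HEAD.search(text))


# Hypothetical workflow: it runs with base-repository secrets, then checks out
# and executes code supplied by the pull request author.
RISKY = """\
on: pull_request_target
jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          ref: ${{ github.event.pull_request.head.sha }}
      - run: make test
"""

print(is_risky_workflow(RISKY))  # True
```

A match is a prompt for manual review, not proof of exploitability. A workflow can use pull_request_target safely if it never runs untrusted code.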

Aqua Security found out and rotated credentials. The incident was disclosed. Case closed - or so it seemed.

But the rotation was incomplete. Some credentials survived. TeamPCP, also tracked as DeadCatx3, PCPcat, ShellForce, and CipherForce, still had access. They waited.

Three weeks later, they used it.

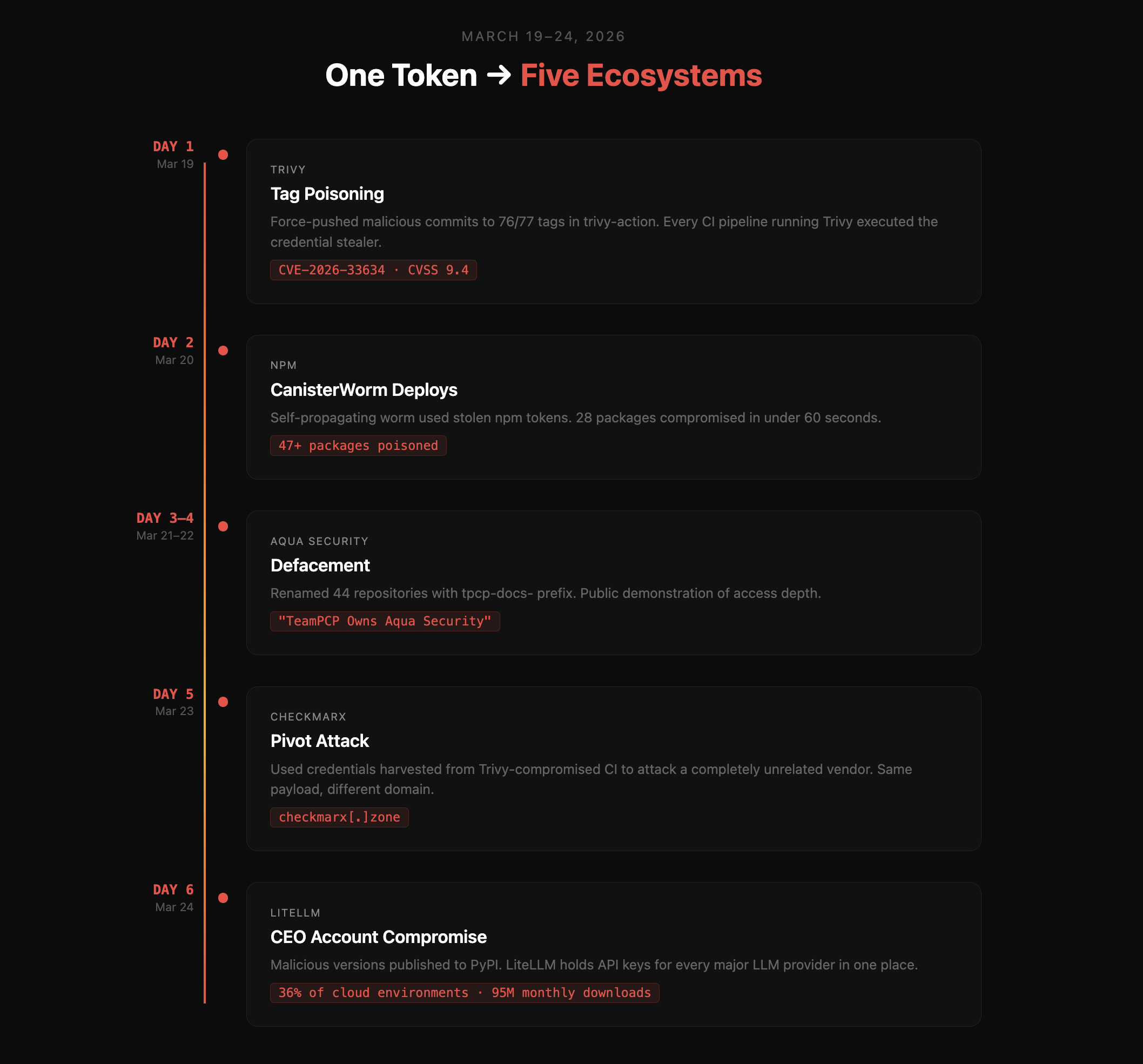

March 19–24: How One Token Became Five Ecosystems

Day 1 - March 19: The Tag Poisoning

Using the credentials that were still valid, TeamPCP compromised Aqua Security's aqua-bot service account. Then they did something technically elegant and deeply dangerous.

They force-pushed malicious commits to 76 of 77 tags in aquasecurity/trivy-action and all 7 tags in aquasecurity/setup-trivy.

Here is why that matters. When you reference a GitHub Action by tag - uses: aquasecurity/trivy-action@v0.20.0 - you are trusting that the tag will always point to the same commit. Most developers believe tags are immutable. They are not. Anyone with push access can reassign a tag to an entirely different commit, and nothing on GitHub's UI will alert the people consuming it. The release history looks clean. The tag name is the same. The code underneath is completely different.

Every CI/CD pipeline running trivy-action then executed the attacker's code on its next run. The legitimate Trivy scan still ran. It returned normal results. Underneath, a credential stealer ran in parallel, invisible.

TeamPCP also published a malicious Trivy binary as an official release: v0.69.4. This was assigned CVE-2026-33634, with a CVSS score of 9.4.

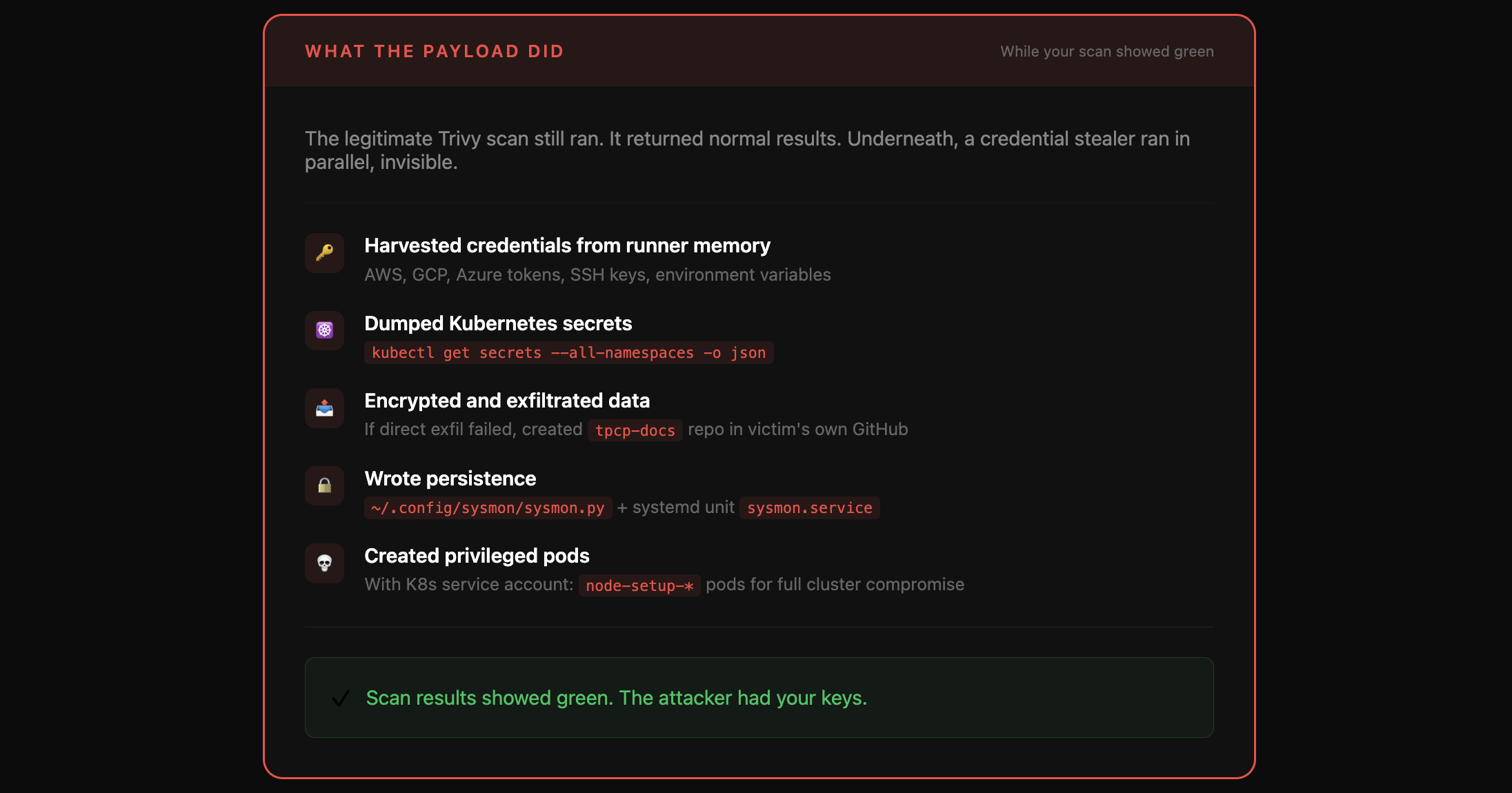

What the payload did, according to Datadog's payload analysis:

- Harvested environment variables, cloud tokens (AWS, GCP, Azure), and SSH keys from runner memory
- Executed `kubectl get secrets --all-namespaces -o json`, a cluster-wide dump of every Kubernetes secret
- Encrypted the stolen data and exfiltrated it to attacker-controlled endpoints
- If direct exfiltration failed, created a `tpcp-docs` repository in the victim's own GitHub account and pushed the stolen data there as a release artifact
- Wrote persistence via `~/.config/sysmon/sysmon.py` and a user systemd unit called `sysmon.service`
- When a Kubernetes service account token was available, created privileged `node-setup-*` pods, turning a package compromise into a full cluster compromise
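The persistence artifacts named above are easy to look for on a runner or developer machine. The Python sketch below checks a home directory for them. The location of the systemd unit is an assumption: the analysis names the unit but not its path, so the sketch uses the usual directory for user units.

```python
from pathlib import Path

# Paths relative to a user's home directory.
# sysmon.py is the path named in the payload analysis. The systemd unit path
# is an ASSUMPTION: ~/.config/systemd/user is the usual place for user units.
ARTIFACTS = (
    ".config/sysmon/sysmon.py",
    ".config/systemd/user/sysmon.service",
)


def find_persistence_artifacts(home: Path) -> list[Path]:
    """Return the known artifact paths that exist under the given home directory."""
    return [home / rel for rel in ARTIFACTS if (home / rel).exists()]


# Check the current user's home directory; an empty list means nothing was found.
print(find_persistence_artifacts(Path.home()))
```

An empty result only means these two files are absent. It says nothing about credentials that already left the machine.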

The scan results showed green. The attacker had your keys.

Day 2 - March 20: 28 Packages in 60 Seconds

With npm tokens stolen from compromised CI environments, TeamPCP deployed CanisterWorm. It was self-propagating: the worm stole npm tokens, resolved which packages each token had permission to publish, bumped patch versions, fetched the original READMEs to preserve appearances, and republished the packages with the malicious payload injected.

Twenty-eight packages were compromised in under 60 seconds. By end of day, 47+ packages across multiple publisher scopes - @EmilGroup, @opengov, and others - had been poisoned. Any developer or CI pipeline that installed an affected package became an unwitting propagation vector.

Day 3–4 - March 21–22: Defacement

Using still-valid credentials and the compromised aqua-bot service account, TeamPCP defaced Aqua Security's internal GitHub organisation. They renamed 44 repositories with the prefix tpcp-docs- and set the description to: "TeamPCP Owns Aqua Security."

This was not just vandalism. It was a public demonstration of access depth - and a warning about how far a single incomplete remediation can reach.

Day 5 - March 23: The Pivot to Checkmarx

This is where the campaign became something different.

TeamPCP used credentials harvested from Trivy-compromised CI environments to attack a completely unrelated vendor. They compromised checkmarx/kics-github-action and checkmarx/ast-github-action, and published malicious versions of two Checkmarx OpenVSX extensions: cx-dev-assist 1.7.0 and ast-results 2.53.0.

The payload was functionally identical to the Trivy stealer. Different action, different domain (checkmarx[.]zone), same underlying kill chain. Sysdig's threat team observed it executing through Checkmarx's AST action and noted that organisations focused on the Trivy advisories would likely have missed this wave entirely - it used a different action, a different domain, and arrived after attention had already moved on.

Rotating Trivy's credentials was not enough, because the stolen tokens had already been used to collect credentials from dozens of downstream environments. The campaign was running on fuel it had already gathered.

Day 6 - March 24: LiteLLM

On March 24, TeamPCP compromised the GitHub account of Krish Dholakia, co-founder and CEO of LiteLLM. Using that access, they published malicious versions of the LiteLLM Python package to PyPI: versions 1.82.7 and 1.82.8.

LiteLLM is not just another Python library. It is the unified API gateway that organisations use to route requests to every major LLM provider - OpenAI, Anthropic, Azure OpenAI, Google Vertex AI, AWS Bedrock. Its entire purpose is to hold API keys for all of them in one place. Wiz Research found it present in 36% of the cloud environments they monitor, and it has approximately 95 million monthly downloads on PyPI. The malicious payload lived inside litellm/proxy/proxy_server.py - not a one-off install hook, but in the package code itself, executing on every import.

LiteLLM is also a transitive dependency. Teams that never deliberately installed it may have had it running in their environments through other packages that depend on it.

As Jacob Krell, Senior Director at Suzu Labs, put it: "TeamPCP did not need to attack LiteLLM directly. They compromised Trivy, a vulnerability scanner running inside LiteLLM's CI pipeline without version pinning."

Six days. One incomplete credential rotation. Five ecosystems.

Why Every Detection Tool Missed It

This is the part that security teams need to sit with.

SCA and dependency scanning produced no signal. The malicious code was injected into trusted, signed GitHub Actions. From the perspective of a dependency scanner, the actions were legitimate - they were published by Aqua Security and Checkmarx, the actual maintainers of those projects. There was no unknown package, no suspicious new dependency to flag.

Domain reputation filtering failed. The typosquat domain scan.aquasecurtiy.org - note the deliberate misspelling - was newly registered and had a clean reputation score at the time of the attack. By the time blocklists updated, the window was already open.

Tag-pinned references gave false confidence. Most teams believe that pinning an action to a version tag is safe. It is not. Tags in Git are mutable references. Pinning to a tag name is not pinning to a commit. The only way to guarantee that a GitHub Action runs the code you reviewed is to pin to a full commit SHA.

The one thing that actually worked was runtime behavioral detection. Sysdig's Falco rules caught both the Trivy and Checkmarx waves - not because they knew about TeamPCP, but because the underlying behavior is observable regardless of how the attacker entered. A CI runner process uploading encrypted binary data to an external domain that was not part of the original workflow is anomalous. System calls don't lie, even when package signatures do.
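The idea of "a destination that was not part of the original workflow" can be sketched without any reputation feed. The Python example below is not how Falco works. It is a simplified illustration that compares an outbound hostname against an allowlist and separately flags near-misses such as the typosquat above. The allowlist contents and the two-label domain rule are assumptions made for illustration.

```python
def edit_distance(a: str, b: str) -> int:
    """Classic Levenshtein distance (insert, delete, substitute)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]


def classify_destination(host: str, allowlist: set[str], max_distance: int = 2) -> str:
    """Classify a hostname as 'allowed', 'lookalike', or 'unexpected'."""
    # Naive simplification: treat the last two labels as the registrable domain.
    base = ".".join(host.lower().rstrip(".").split(".")[-2:])
    if base in allowlist:
        return "allowed"
    if any(0 < edit_distance(base, known) <= max_distance for known in allowlist):
        return "lookalike"
    return "unexpected"


# Hypothetical allowlist of domains a scan job is expected to contact.
ALLOWLIST = {"github.com", "aquasecurity.org"}

for host in ("api.github.com", "scan.aquasecurtiy.org", "checkmarx.zone"):
    print(host, classify_destination(host, ALLOWLIST))
```

The distance threshold is a trade-off: short legitimate domains that differ by two characters from an allowlisted one would also be flagged. The more useful signal is the third category. Anything outside the allowlist deserves attention whether or not it resembles a known name.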

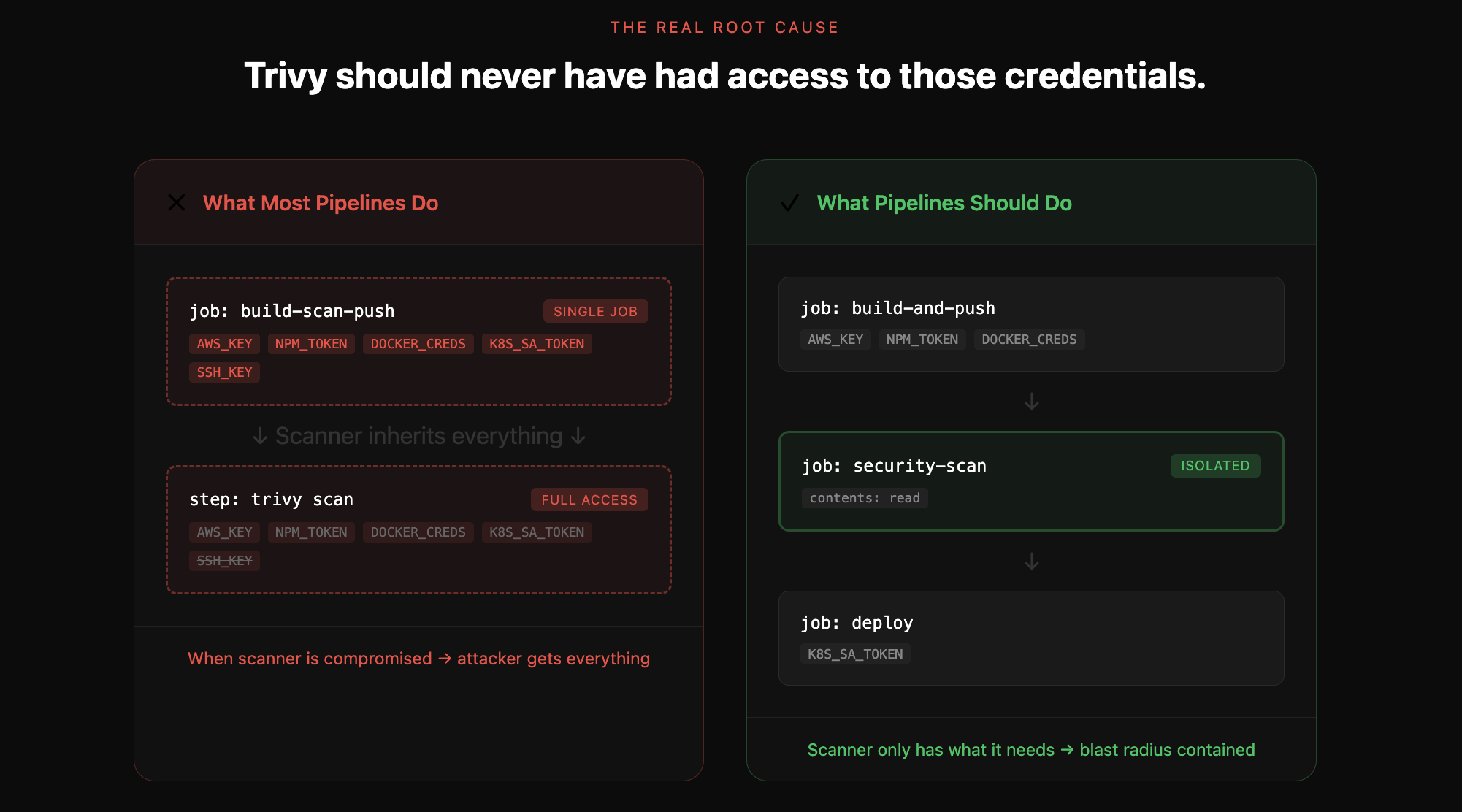

The Real Root Cause Nobody Is Saying Clearly Enough

Here is what most of the post-incident analysis has underreported.

Trivy should never have had access to those credentials.

A static code scanner has one job: read code and report on what it finds. It does not need AWS keys. It does not need npm publish tokens. It does not need Docker registry credentials, SSH keys, or Kubernetes service account tokens. A scanner needs read access to code and nothing else.

The reason Trivy had access to all of those things is a textbook least-privilege violation. In the vast majority of affected environments, build, scan, and push were running in the same CI job, in the same environment, sharing the same credentials. When the scanner was compromised, it inherited everything the build and deploy steps could touch.

This is not an exotic misconfiguration. It is the default.

Why? Two reasons.

Convenience. The standard pattern shown in most Trivy setup guides, blog posts, and starter templates is a single workflow job that builds the container, pushes it, and then scans it. Fewer lines of YAML. No artifact passing between jobs. Easier to reason about in a single file. Splitting the scan into a separate job with its own isolated permissions adds complexity and execution time, and most documentation doesn't push you toward it.

Defaults and documentation. Trivy's own GitHub Action documentation historically presented it as a step inside an existing workflow - not as an isolated job with its own scope. Developers copy what the docs show. Most never stop to ask what secrets their scanner can see.

The result: millions of CI pipelines configured in a way that hands a security tool far more access than it needs. When that tool gets compromised, the attacker doesn't just get one pipeline. They get everything the pipeline touches.

Where Kolega Sits in This

We are not going to claim we would have stopped TeamPCP. That would be dishonest.

What we can say is that the architecture we chose from the beginning - and the reasons we chose it - turn out to matter here.

Kolega connects to your repository via a GitHub App with minimal OAuth-scoped permissions: read-only access to code. That is all we ask for, because that is all we need. We have no access to your CI pipeline credentials, your cloud tokens, your npm publish keys, or anything else your build and deploy processes touch. Our analysis runs in an isolated environment that is completely separate from your pipeline execution.

We only need to read code. So we only ask to read code.

This is not a security product pitch. It is the practical outcome of following the same least-privilege principle that the affected teams were not following in their pipelines.

What we are working on next is making this principle enforceable for others. We are building CI/CD pipeline analysis specifically to flag this class of misconfiguration - cases where a scanner or security tool is running in the same job context as build and deploy credentials - before it becomes an attack surface. We will share more on that soon.

What to Do Right Now

If you ran Trivy, Checkmarx KICS, or LiteLLM between March 19 and March 24, treat your environment as compromised.

Immediate actions:

- Rotate every secret accessible to CI/CD pipelines that ran these tools during that window. AWS keys, npm tokens, Docker credentials, SSH keys, Kubernetes service account tokens, GitHub tokens - all of it. Do not triage. Rotate everything.
- Check your GitHub organisation for any repository named with a `tpcp-docs` prefix. This is the fallback exfiltration mechanism - if the primary C2 endpoint was unreachable, the payload created a repository in your own account and pushed stolen data there as a release artifact.
- Search runner logs for these indicators: `scan.aquasecurtiy.org`, `45.148.10.212`, `checkmarx[.]zone`, `/tmp/runner_collected_`, `tpcp.tar.gz`
- On any system running LiteLLM, check for `litellm_init.pth`. This is how the persistence mechanism installed itself: Python processes `.pth` path configuration files at interpreter startup and executes any line in them that begins with `import`.
- Pin to safe versions: `litellm==1.82.6` (last known clean), Trivy `v0.69.3` or earlier.
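The log search and the `.pth` check from the list above can be sketched in a few lines of Python. This is a starting point under simple assumptions, not a complete detection: it does plain substring matching, and the defanged `checkmarx[.]zone` is written in its live form so it matches what would actually appear in logs.

```python
# Indicators from the list above. checkmarx[.]zone is refanged for matching.
INDICATORS = (
    "scan.aquasecurtiy.org",
    "45.148.10.212",
    "checkmarx.zone",
    "/tmp/runner_collected_",
    "tpcp.tar.gz",
)


def find_indicators(log_text: str) -> dict[str, list[int]]:
    """Map each indicator found to the 1-based line numbers where it appears."""
    hits: dict[str, list[int]] = {}
    for lineno, line in enumerate(log_text.splitlines(), start=1):
        lowered = line.lower()
        for ioc in INDICATORS:
            if ioc in lowered:
                hits.setdefault(ioc, []).append(lineno)
    return hits


def executable_pth_lines(pth_text: str) -> list[str]:
    """Return the lines of a .pth file that Python would execute at startup.

    The site module executes lines that start with 'import' followed by a
    space or tab; other non-comment lines are treated as paths.
    """
    return [ln for ln in pth_text.splitlines() if ln.startswith(("import ", "import\t"))]


# Hypothetical log excerpt for illustration.
sample_log = "step ok\nPOST https://scan.aquasecurtiy.org/upload\nwrote /tmp/runner_collected_01\n"
print(find_indicators(sample_log))
```

Any `.pth` file in `site-packages` with executable lines is worth reading, whatever its name. Legitimate packages do use the mechanism, so a hit calls for inspection rather than automatic deletion.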

Structural changes:

Separate your scanner from your build. This is the single most important thing that comes out of TeamPCP.

Your scan job should run with the minimum permissions required to read code - not the credentials needed to build images, push to registries, or deploy to production. In GitHub Actions, that means a dedicated job with its own permissions block scoped to what scanning actually needs. It adds a few lines of YAML. It dramatically reduces the blast radius if a scanner is ever compromised again.
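Finding the jobs that need splitting is a good first step. The Python sketch below is a coarse audit that flags any job which both runs a scanner action and references secrets anywhere in its body. It assumes two-space YAML indentation and a short, illustrative list of scanner actions. The sample workflow and its `<full-commit-sha>` placeholder are hypothetical.

```python
import re

# ASSUMPTION: an illustrative list of scanner actions, not an exhaustive one.
SCANNER_ACTIONS = (
    "aquasecurity/trivy-action",
    "checkmarx/kics-github-action",
    "checkmarx/ast-github-action",
)
JOB_HEADER = re.compile(r"^  ([A-Za-z0-9_-]+):\s*$")  # assumes 2-space indentation


def split_jobs(workflow_text: str) -> dict[str, str]:
    """Split the `jobs:` section of a workflow into {job_name: job_body}."""
    bodies: dict[str, list[str]] = {}
    in_jobs = False
    current = None
    for line in workflow_text.splitlines():
        if not line.strip() or line.lstrip().startswith("#"):
            continue  # skip blank lines and whole-line comments
        if not line[0].isspace():  # a top-level key such as `on:` or `jobs:`
            in_jobs = line.startswith("jobs:")
            current = None
            continue
        if not in_jobs:
            continue
        header = JOB_HEADER.match(line)
        if header:
            current = header.group(1)
            bodies[current] = []
        elif current is not None:
            bodies[current].append(line)
    return {name: "\n".join(body) for name, body in bodies.items()}


def overprivileged_scan_jobs(workflow_text: str) -> list[str]:
    """Return names of jobs that run a scanner and also reference secrets."""
    flagged = []
    for name, body in split_jobs(workflow_text).items():
        runs_scanner = any(action in body for action in SCANNER_ACTIONS)
        if runs_scanner and "secrets." in body:
            flagged.append(name)
    return flagged


# Hypothetical workflow: one combined job, one isolated scan job.
WORKFLOW = """\
name: ci
on: push
jobs:
  build-scan-push:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: docker build -t app .
      - uses: aquasecurity/trivy-action@<full-commit-sha>
      - run: docker push app
        env:
          REGISTRY_TOKEN: ${{ secrets.REGISTRY_TOKEN }}
  scan-only:
    runs-on: ubuntu-latest
    permissions:
      contents: read
    steps:
      - uses: actions/checkout@v4
      - uses: aquasecurity/trivy-action@<full-commit-sha>
"""

print(overprivileged_scan_jobs(WORKFLOW))  # ['build-scan-push']
```

The check is deliberately blunt. It treats the job as the trust boundary because everything in a job shares one runner, which matches how the payload harvested credentials from runner memory.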

Audit what your security tooling can see right now. If your scanner has access to AWS keys or npm publish tokens, ask why. In almost every case, the answer is convenience - they inherited those credentials from the surrounding job context without anyone making a deliberate choice.

Pin GitHub Actions to full commit SHAs. Not tag names. SHAs. A tag name like @v0.20.0 can be silently reassigned. A commit SHA cannot. This is the mitigation that would have stopped the tag poisoning phase of TeamPCP entirely.
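Auditing for this is mechanical. The Python sketch below lists every `uses:` reference in a workflow that is not pinned to a full 40-character commit SHA. It is a regex-based approximation rather than a YAML parser, and it skips local actions and `docker://` references.

```python
import re

USES = re.compile(r"^\s*-?\s*uses:\s*([^\s#]+)", re.MULTILINE)
FULL_SHA = re.compile(r"^[0-9a-f]{40}$")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return action references that are not pinned to a full commit SHA."""
    unpinned = []
    for ref in USES.findall(workflow_text):
        ref = ref.strip("'\"")
        if ref.startswith(("./", "docker://")):
            continue  # local actions and container images are out of scope here
        _, _, version = ref.partition("@")
        if not FULL_SHA.match(version):
            unpinned.append(ref)
    return unpinned


# Hypothetical steps; the 40-character value is a placeholder, not a real commit.
SAMPLE = """\
steps:
  - uses: actions/checkout@v4
  - uses: aquasecurity/trivy-action@v0.20.0
  - uses: example-org/example-action@0123456789abcdef0123456789abcdef01234567
  - uses: ./local-action
"""

print(unpinned_actions(SAMPLE))
# ['actions/checkout@v4', 'aquasecurity/trivy-action@v0.20.0']
```

Run it across every file in `.github/workflows` and treat each result as a reference that someone with push access to that action could silently repoint.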

Enable runtime behavioral monitoring on self-hosted runners. The behavioral signal - a CI process making outbound encrypted POST requests to an unexpected domain - was detectable. Static analysis of the actions themselves was not enough.

And finally: treat incomplete remediation as no remediation. Aqua rotated credentials after the first incident. They didn't rotate all of them. One surviving token was enough for TeamPCP to come back three weeks later and finish what they started. When you respond to a credential compromise, you have to assume everything was accessed and rotate exhaustively, not just the credentials you know were touched.

A Different Kind of Supply Chain Attack

Supply chain attacks usually target the libraries your code depends on. An attacker poisons a package you import, and anyone who updates gets the malicious version. That threat model has been discussed, documented, and partially addressed - dependency scanning, lockfiles, pinned versions.

TeamPCP is a different threat model. It targeted the tools you use to protect yourself.

The organisations most thoroughly exposed to this campaign were the ones doing everything right: running automated vulnerability scans on every build, using trusted enterprise-grade tooling. Their diligence was the attack surface. The more conscientiously a team ran Trivy, the more pipelines executed the credential stealer.

Most security programs were not designed with this in mind. The assumption, often implicit, is that security tools are trusted infrastructure - you add them to protect your code, and that's the end of the analysis. TeamPCP is evidence that this assumption needs to be explicitly revisited.

Your scanner is software. It is written by humans, it lives in repositories, it has credentials and release processes and CI/CD pipelines of its own. When you grant it access, that access is only as safe as the entire chain of trust behind it.

Audit what you've trusted. Separate what needs to be separated. Pin to commit SHAs.

The window for immediate response is closing. The structural work doesn't have a deadline.

If you want to see what semantic code analysis that operates outside your pipeline looks like in practice, the vulnerabilities we've found across 45 open-source projects - and the pull requests that fixed them - are documented on our Security Wins page.