Your "Won't Fix" Backlog Just Became a Zero-Day Catalogue

Every security team has a list. It lives in Jira, in a spreadsheet, in a folder nobody opens anymore. The labels vary. "Defense in depth only." "Not exploitable in isolation." "Low CVSS, defer." "Accept risk."

It is not a list of things that don't matter. It is a list of things your team made a rational decision not to fix. And that decision was rational. Microsoft codified the same logic in their own published servicing policy:

"A bypass for a defense-in-depth security feature by itself does not pose a direct risk because an attacker must also have found a vulnerability that affects a security boundary, or they must rely on additional techniques, such as social engineering."

Defense-in-depth issues, Microsoft writes, "by default will not be serviced." That is the policy of the largest software vendor on the planet. Every CISO, every AppSec lead, every engineering manager has been making variations of the same call ever since.

That triage was rational because chaining those deferred items into a real exploit required a skilled human researcher, deep architectural knowledge of the target, and weeks of patient work. The cost of building the chain was higher than any realistic attacker would pay.

That cost just collapsed.

The industry is asking the wrong question

Since Anthropic announced Project Glasswing and Claude Mythos Preview on April 7, every security vendor, every analyst, every CISO has been asking the same question: can we find and patch vulnerabilities faster than AI can find them?

The answer to that question is no.

Mythos generated 181 working Firefox exploits in a single benchmark where Claude Opus 4.6 generated 2. It found a flaw in FFmpeg that had been sitting in the H.264 codec since 2010. It found a 27-year-old TCP SACK vulnerability in OpenBSD. David Lindner, CISO at Contrast Security, said it cleanly to Fortune: "We've never had a problem finding vulnerabilities. We find them every day. We actually have a pile of them that we just don't fix."

Veracode's 2026 State of Software Security report, drawn from 1.6 million applications, puts numbers on the pile. 82% of organisations carry security debt. 60% carry critical security debt. The average time to close half of an organisation's open vulnerabilities has crept from 171 days to 252 days. Lagging teams fix less than 1% of their backlog per month. That was the state of play before the discovery side got an order-of-magnitude boost.

You cannot outrun that. A bow and arrow does not defend against a Stinger missile. The arithmetic of "find it, triage it, route it, fix it, test it, ship it" versus an AI that generates chains in afternoons does not come out in defence's favour, no matter how well your team runs its sprints.

So stop asking it.

The right question is different. It is not how many bugs you can find and fix before Mythos does. It is how many of the links in the chains Mythos wants to build you can cut, fast enough that the chains never close.

A chain is an all-or-nothing object

This is the part the current discourse is missing, and it is the part that actually helps.

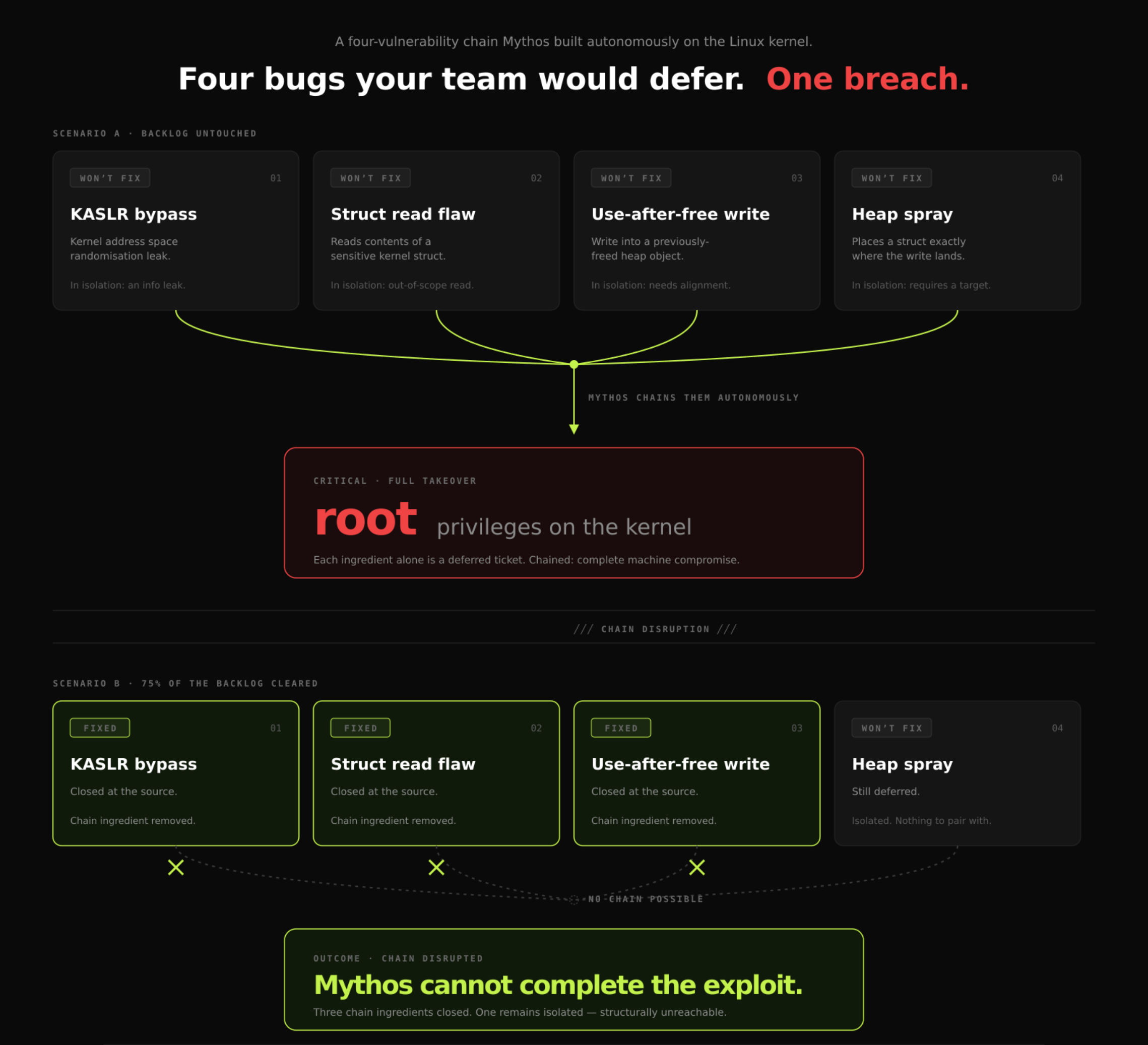

Look at Anthropic's own worked example of what Mythos did on the Linux kernel:

"We have nearly a dozen examples of Mythos Preview successfully chaining together two, three, and sometimes four vulnerabilities in order to construct a functional exploit on the Linux kernel. For example, in one case, Mythos Preview used one vulnerability to bypass KASLR, used another vulnerability to read the contents of an important struct, used a third vulnerability to write to a previously-freed heap object, and then chained this with a heap spray that placed a struct exactly where the write would land, ultimately granting the user root permissions."

Four links: three vulnerabilities and a heap spray. Taken individually, each vulnerability is a classic defense-in-depth issue that most teams would defer. KASLR bypass alone is not a breach. Reading a struct alone is not a breach. The use-after-free write alone requires a target that does not naturally exist. The heap spray alone needs something to pair with. Combined: root.

Now the critical observation. Remove any one of those four, and the chain does not complete. Not "the chain is weaker." Not "the attacker has to work harder." The chain does not complete. An exploit chain is not a score you average. It is a circuit. You cut one wire and the bomb does not detonate.
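The all-or-nothing property is easy to state in code. The sketch below is a minimal model, not a description of any real exploit: the link names are hypothetical labels loosely following the kernel example quoted above, and `chain_completes` is an illustrative helper.

```python
# Hypothetical link names, loosely following the quoted kernel example.
KERNEL_CHAIN = frozenset({"kaslr_bypass", "struct_read", "uaf_write", "heap_spray"})

def chain_completes(chain, closed):
    """A chain works only if none of its links has been closed."""
    return chain.isdisjoint(closed)

# With nothing closed, the chain completes.
print(chain_completes(KERNEL_CHAIN, closed=set()))  # True

# Closing any single link, whichever one, defeats the whole chain.
for link in sorted(KERNEL_CHAIN):
    print(link, chain_completes(KERNEL_CHAIN, closed={link}))  # False each time
```

There is no partial credit in this model: the result is a boolean, not a score.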

This generalises. The Capital One breach in 2019 was a chain of two: WAF-permitted SSRF plus an overly permissive IAM role. Kill either and there is no breach. ProxyShell in 2021 was three Exchange vulnerabilities that together produced unauthenticated RCE on every internet-facing Exchange server in the world; patch any one of the three and the chain fails. Google Project Zero's August 2019 writeup documented five complete in-the-wild iOS chains using 14 distinct vulnerabilities, and each one of those chains was defeated the moment a single link in it was closed.

Exploit chains are fragile the way physical chains are fragile: break one link and the whole thing fails. That is their structural property. This is not a thesis, it is how offensive security has always worked.

Chain Diagram

This is the opening

Here is what follows from that, and it is the opposite of the defeatist framing the industry has slid into this week.

You do not need to fix every deferred issue. You need to fix enough of them that no completable chain can be assembled from what remains. That is a dramatically easier target. It is not "empty the pile." It is "cut enough wires."

In practice, remediating 75% of the defense-in-depth issues in a codebase does not give you 75% protection. It gives you close to complete protection against chain construction, because every remaining "Won't Fix" item is now an isolated node in a graph whose neighbours are gone. An attacker, whether human or a Mythos-class agent, cannot build a working exploit out of a single dangling bug. They need the adjacent bugs too, and those have been closed.

This is airgapping in its classical sense. You do not have to put up a perfect wall. You have to separate the pieces so they cannot reach each other. In kinetic terms: you only need to cut one wire to disarm a bomb. In this case we are not cutting one, we are cutting four out of five, and the protection that buys is disproportionate to the work.
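One way to make "cut enough wires" concrete is to treat it as a hitting-set problem: given the chains an attacker could assemble, pick a set of links such that every chain loses at least one. The sketch below is a simple greedy heuristic over made-up data. The chain contents and the `greedy_cut` helper are hypothetical, and greedy selection is not guaranteed to find the smallest possible cut.

```python
from collections import Counter

def greedy_cut(chains):
    """Pick links to close until no chain is left intact (greedy hitting set)."""
    remaining = [set(chain) for chain in chains]
    closed = []
    while remaining:
        counts = Counter(link for chain in remaining for link in chain)
        # Close the link shared by the most surviving chains; break ties by name.
        best = min(counts, key=lambda link: (-counts[link], link))
        closed.append(best)
        remaining = [chain for chain in remaining if best not in chain]
    return closed

# Hypothetical candidate chains built from seven distinct deferred findings.
chains = [
    {"info_leak", "uaf_write", "heap_spray"},
    {"info_leak", "ssrf", "iam_role"},
    {"info_leak", "open_redirect"},
    {"uaf_write", "weak_sandbox"},
]
closed = greedy_cut(chains)
print(closed)  # ['info_leak', 'uaf_write']
```

In this toy example, closing two of the seven findings leaves no chain intact, because both sit on several chains at once. Real backlogs will not be this tidy, and the hard part is enumerating the chains in the first place.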

And the maths of doing that work has become tractable in a way it never was before.

The throughput that makes it tractable

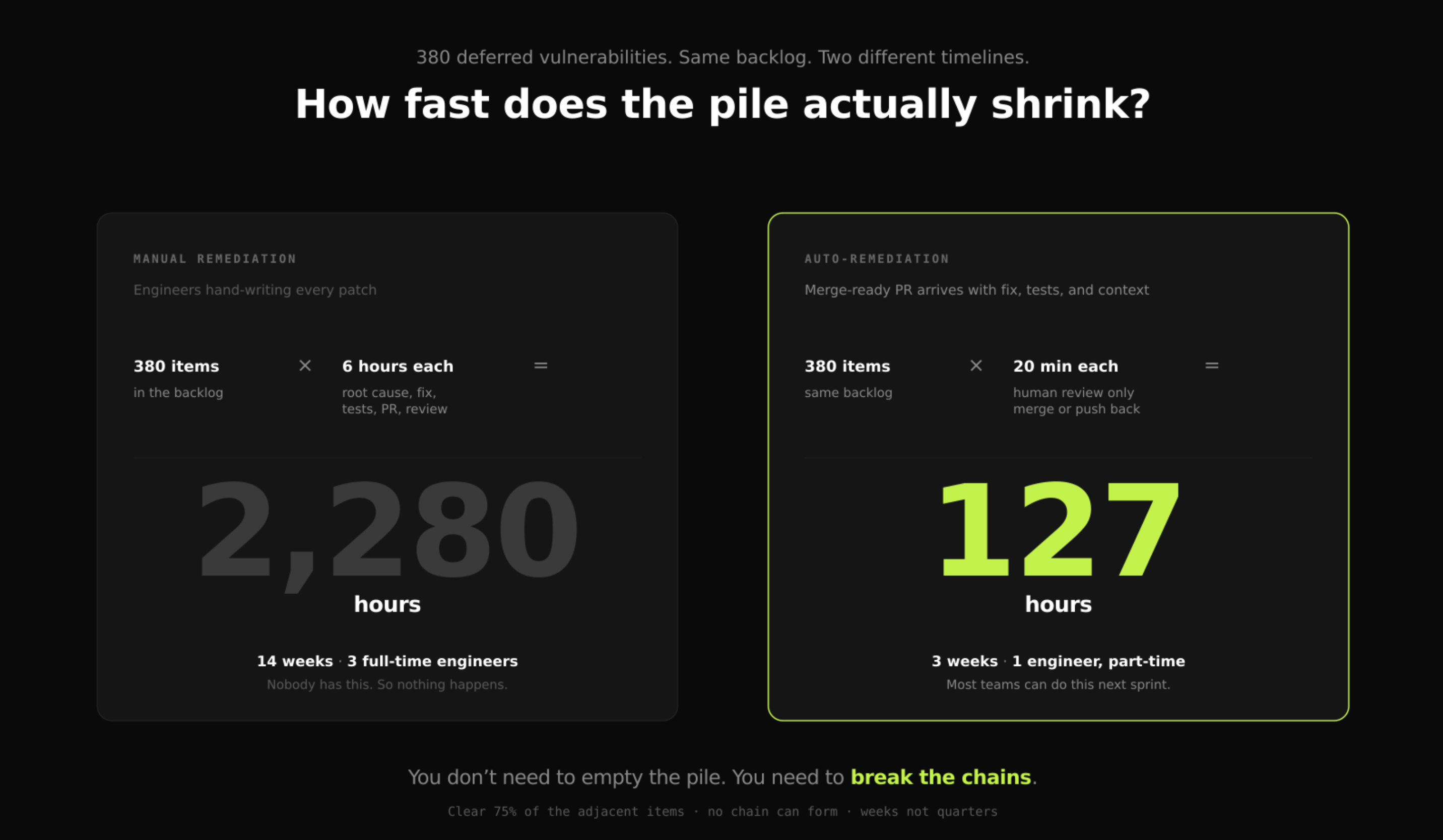

Pretend you sit down with your team and accept the argument. You agree the pile needs revisiting. You have, say, 380 deferred items across your repositories. The team estimates a clean, tested fix for an average defense-in-depth issue takes four to eight hours of senior engineering time. Call it six.

That is 2,280 hours. At forty hours a week, that is a team of three full-time engineers doing nothing else for nineteen weeks straight.

Nobody has nineteen weeks. Nobody can spare three engineers. That is exactly how you got to 380 deferred items in the first place. The backlog grew not because anyone thought those issues did not matter, but because the team was already saturated when each one landed. The industry has been running for years on a throughput problem dressed up as a triage problem.

The throughput maths only changes if the cost per remediation collapses. And the only way that collapses is if the heavy lifting (root cause analysis, fix design, test writing, regression checks, PR drafting) happens before the human ever opens the ticket. A maintainer can review a PR in twenty minutes. They cannot write the same PR in twenty minutes. The compression is not "find faster." It is "show up with the work already done."

Run the numbers again with that compression. 380 items × 20 minutes of human review ≈ 127 hours. A little over three weeks for one engineer. That is the work of a single sprint, not a quarter-long project. And because you are specifically targeting the defense-in-depth class, the items that sit structurally adjacent to something worse, you do not need to clear all 380 to break the chains. You need to clear the 75% that matter. Ninety-five or so hours. Under two and a half weeks of one engineer's time.
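The per-item compression is worth checking by hand. The short sketch below recomputes the two totals from the example figures in the text (380 items, six hours to write a fix, twenty minutes to review one); the variable and function names are illustrative only.

```python
ITEMS = 380          # deferred items, from the example above
WRITE_HOURS = 6      # senior-engineer hours to write one fix
REVIEW_MINUTES = 20  # minutes to review one ready-made PR

def total_hours(items, hours_each):
    """Total engineering hours for a backlog at a fixed cost per item."""
    return items * hours_each

write_total = total_hours(ITEMS, WRITE_HOURS)
review_total = total_hours(ITEMS, REVIEW_MINUTES / 60)

print(write_total)                        # 2280
print(round(review_total))                # 127
print(round(write_total / review_total))  # 18
```

The ratio is the point: at these figures, reviewing a finished PR costs roughly one eighteenth of writing it, and that ratio holds whatever the size of the backlog.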

Throughput math

Why the chain disruption argument holds

There are two separate things to believe for the argument above to work. One is that today's scanning and discovery tooling has a structural blind spot large enough that the backlog contains serious chain ingredients. The other is that closing those ingredients meaningfully frustrates chain construction. We have published evidence for the first. The second follows from how remediation works.

On the blind-spot question, we published RealVuln in April 2026, an open-source benchmark comparing fifteen scanners across rule-based SAST, general-purpose LLMs, and specialist security tools on 796 hand-labelled vulnerabilities in 26 real-world Python repositories. We weighted the scoring on F3, a recall-heavy metric that treats a missed vulnerability as nine times worse than a false positive, because in production an undetected flaw is how you lose and a false positive costs analyst time.
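For readers who have not met F3 before, it is the β = 3 case of the standard F-beta score, which weights recall β² = 9 times as heavily as precision. The sketch below uses the textbook formula with two hypothetical scanners. It assumes scores on a 0 to 1 scale and is not taken from the RealVuln codebase, whose exact scoring may differ.

```python
def f_beta(precision, recall, beta=3.0):
    """Standard F-beta score: recall counts beta**2 times as much as precision."""
    if precision == 0 and recall == 0:
        return 0.0
    b2 = beta ** 2
    return (1 + b2) * precision * recall / (b2 * precision + recall)

# Hypothetical scanners: one precise but blind, one noisy but thorough.
precise = f_beta(precision=0.90, recall=0.20)
thorough = f_beta(precision=0.40, recall=0.80)
print(f"{precise:.3f} {thorough:.3f}")  # 0.217 0.727
```

Under F3 the scanner that finds most of the bugs scores far higher, even though more than half of its alerts are noise. That is the trade the metric is designed to reward.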

The result was a three-tier hierarchy with real separation. Semgrep: F3 of 17.7. Snyk Code: 17.4. SonarQube: 7.1. The general-purpose LLMs (Claude Sonnet, Gemini, Grok, Kimi) landed in the 40s and 50s. Specialist tools with semantic analysis occupied the top tier. Everything is at realvuln.kolega.dev, every label and scanner output is in the public repo, and the methodology is designed to be audited.

What that benchmark proves is narrow but important: the tools most teams have relied on for years miss a very large fraction of the vulnerabilities that are demonstrably present. The "Won't Fix" backlog is only the conscious part of the problem. Underneath it is a second, larger layer of defense-in-depth issues that were never flagged at all because the scanner was structurally incapable of seeing them. Those unseen issues are exactly the class of bug a Mythos-class system with semantic understanding will now find first.

The chain disruption argument itself does not need benchmark data, because it follows from the definition of remediation. A chain is a circuit. Cut any link and the circuit does not close. When a KASLR bypass is properly closed at the source, the attacker does not get a weaker KASLR bypass, they get no KASLR bypass. The ingredient is gone. The graph of reachable chain components fragments. That is what airgapping means at the code level. It is not a firewall. It is the removal of the thing the attacker was going to use.

Combine the two and the picture is this: you have a conscious backlog of deferred items, and almost certainly an unconscious one of items nobody has surfaced yet. Every one of those is a potential chain ingredient. Closing them properly at the source, with a structurally sound fix, removes them from the graph entirely. You do not have to close all of them. You have to close enough of the adjacent ones that no completable chain remains. That is the work.

The backlog, paradoxically, gets bigger for the things you actually need to solve: the critical items, the business logic flaws, the authentication design decisions that require architectural thinking. That work is still on you. What comes off your plate is the defense-in-depth pile that has been sitting there growing for three years. That pile was load-bearing. Now it can be cleared.

What changes now

The fix is not panic. The fix is not re-opening every closed ticket from the last three years for another round of manual triage that will produce the same deferrals for the same reasons. The fix is treating the deferred backlog as the attack surface it now is, and clearing enough of it to break any chain an attacker might try to assemble.

Three things worth doing this quarter, in order of immediate yield.

Know what is in the pile. If you cannot produce, in under an hour, a complete list of every defense-in-depth and "low CVSS, defer" decision your team has made in the last two years, that is the first problem. You cannot disrupt chains you cannot enumerate. Most teams cannot enumerate.

Find the adjacencies, not the items. A backlog of 400 individual findings is unmanageable. A map of which of those findings sit on the same data flow as something more dangerous is actionable. This is the question semantic analysis is built to answer. It turns a pile into a graph and a graph into a prioritisation.

Close enough of the adjacent nodes to fragment the graph. You are not trying to empty the pile. You are trying to make it structurally impossible for any combination of what remains to form a working chain. Seventy-five percent of the right items, closed properly, disarms the bomb. And with auto-remediation and merge-ready PRs, that work is weeks, not quarters.
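As a rough illustration of the second and third steps, the sketch below turns a flat list of findings into an adjacency map and then checks which findings are left isolated after a set of fixes. Everything in it is hypothetical: the finding IDs, the data-flow labels, and the simplifying assumption that two findings are "adjacent" when they sit on the same data flow. Real adjacency analysis is considerably harder than a set intersection.

```python
from collections import defaultdict
from itertools import combinations

def adjacency(findings):
    """Link findings that share at least one data flow.

    `findings` maps a finding ID to the set of data-flow labels it sits on.
    """
    by_flow = defaultdict(set)
    for finding, flows in findings.items():
        for flow in flows:
            by_flow[flow].add(finding)
    graph = {finding: set() for finding in findings}
    for members in by_flow.values():
        for a, b in combinations(sorted(members), 2):
            graph[a].add(b)
            graph[b].add(a)
    return graph

def isolated(graph, closed):
    """Open findings that have no open neighbour left."""
    return sorted(
        finding
        for finding, neighbours in graph.items()
        if finding not in closed and not (neighbours - closed)
    )

# Hypothetical backlog: finding ID -> data flows it touches.
findings = {
    "F-101": {"login", "session"},
    "F-102": {"login"},
    "F-103": {"session", "export"},
    "F-104": {"export"},
    "F-105": {"billing"},
}
graph = adjacency(findings)
print(isolated(graph, closed=set()))               # ['F-105']
print(isolated(graph, closed={"F-101", "F-103"}))  # ['F-102', 'F-104', 'F-105']
```

Closing the two best-connected findings leaves the other three with no open neighbours, which is the fragmentation the third step is aiming for.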

This is the only defensive posture that is actually in scale with the new offence. You cannot match Mythos on discovery speed. You do not have to. You can make your code a target where chains do not complete, and you can do it fast enough to matter, with a team you already have.

Where to start

This is the work kolega.dev was built for. Not another scanner that adds to the pile but rather a remediation layer that identifies which items are structurally adjacent to something worse, auto-generates tested PRs for the fix, and lets your team clear the chain ingredients at review speed rather than write speed.

We did this 225 times across 45 open-source projects last year. The NocoDB SQL injection Semgrep walked past. The Phase double-negative authorisation bypass. The vLLM unsafe deserialisation. Each one a defense-in-depth issue in isolation. Each one an ingredient in a chain Mythos could now assemble in an afternoon. Maintainers accepted 90.24% of the ones they reviewed. That acceptance rate is not a marketing number, it is what happens when the PR arrives already written, tested, and explained.

If you want to see what your own "Won't Fix" backlog actually looks like once it is mapped as a graph rather than a list (which items are isolated, which are chain-adjacent, which are quietly load-bearing), start with a free scan at kolega.dev. No enterprise contract, no sales call. Connect a repository, run the scan, see your own chain map and the PRs that disrupt it. Most teams get there in under an hour.

The triage assumption was load-bearing. It is not load-bearing anymore. Chain disruption is. That is a fight we can win and it starts with the thing your team has been deferring for three years.