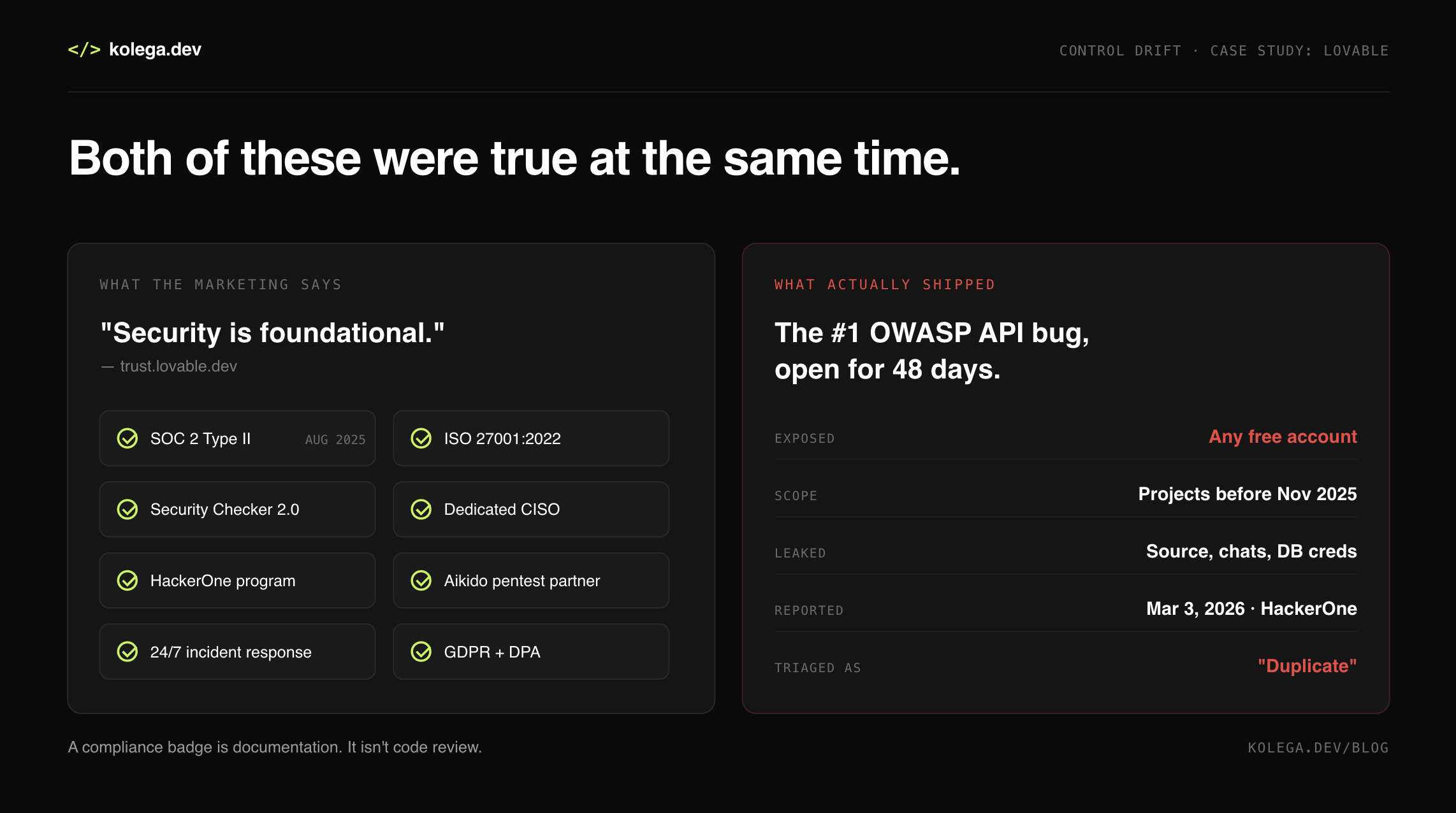

When SOC 2 Type II Compliant Means Anyone Can Read Your Source Code

Lovable's compliance posture, August 2025 to April 2026. And what shipped to production.

The claim: security is foundational

Lovable's Trust Center opens with a sentence the marketing team clearly loves: "At Lovable, security isn't just a feature, it's foundational to everything we build."

The Data Processing Agreement backs it up. Lovable "shall maintain SOC 2 Type II and ISO 27001 accreditations for the duration of the term of the Agreement." The security page lists WAF controls, network isolation, encrypted storage, adaptive rate limiting. Enterprise customers get SSO, SCIM, RBAC, audit logs. There's a Security Checker 2.0. A dedicated CISO. An announcement about partnering with Aikido for penetration testing, published one week before this incident broke.

On August 13, 2025, Lovable announced SOC 2 Type II compliance. Their blog post that day: "We don't just talk about security. We prove it."

They proved something, alright. Just not what they intended.

What actually happened

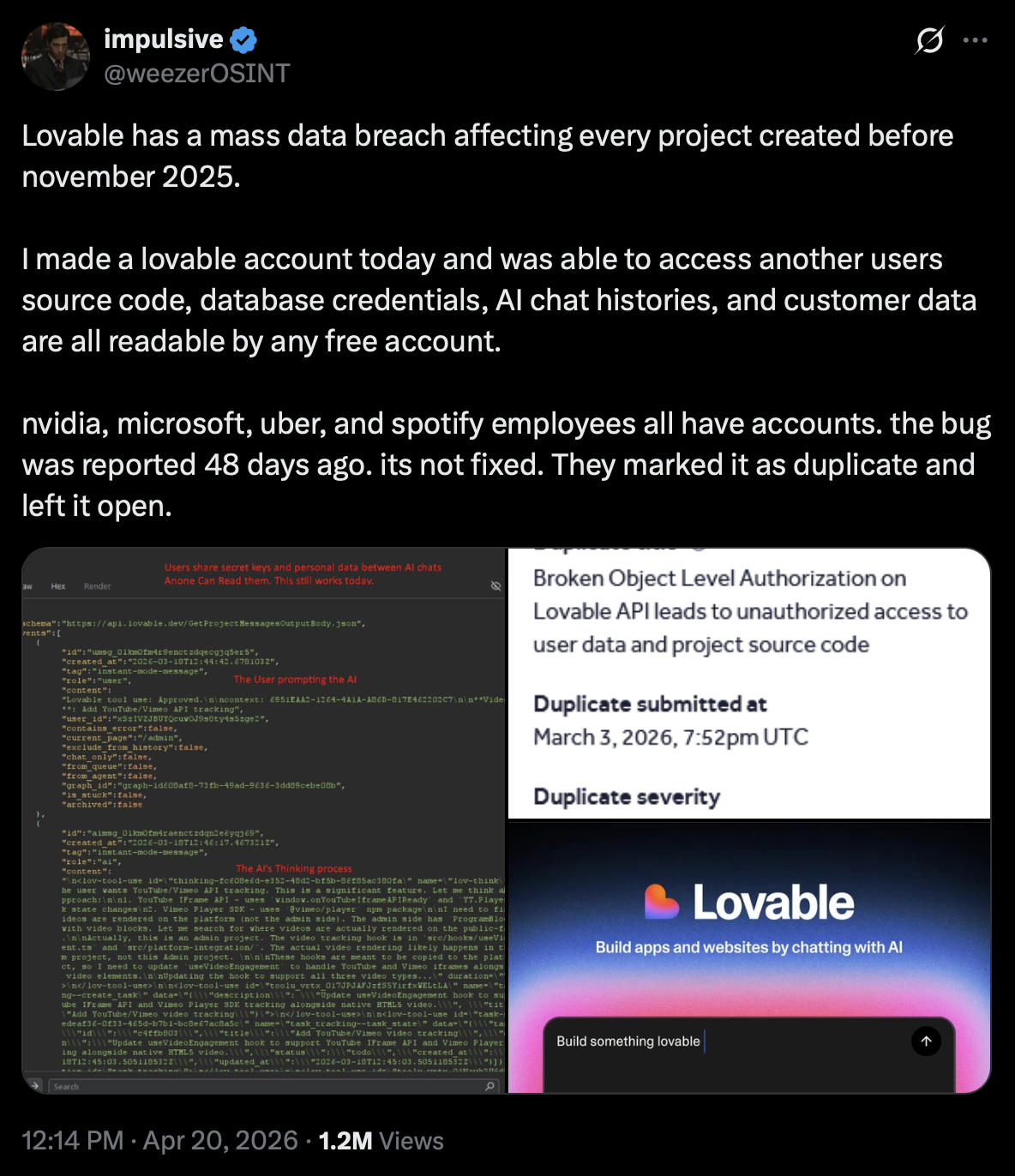

On April 20, 2026, a security researcher going by @weezerOSINT posted a thread on X. The summary is one sentence long and it deserves to be quoted in full:

"I made a lovable account today and was able to access another users source code, database credentials, AI chat histories, and customer data are all readable by any free account."


No offensive hacking. Five API calls from a free account. That's it.

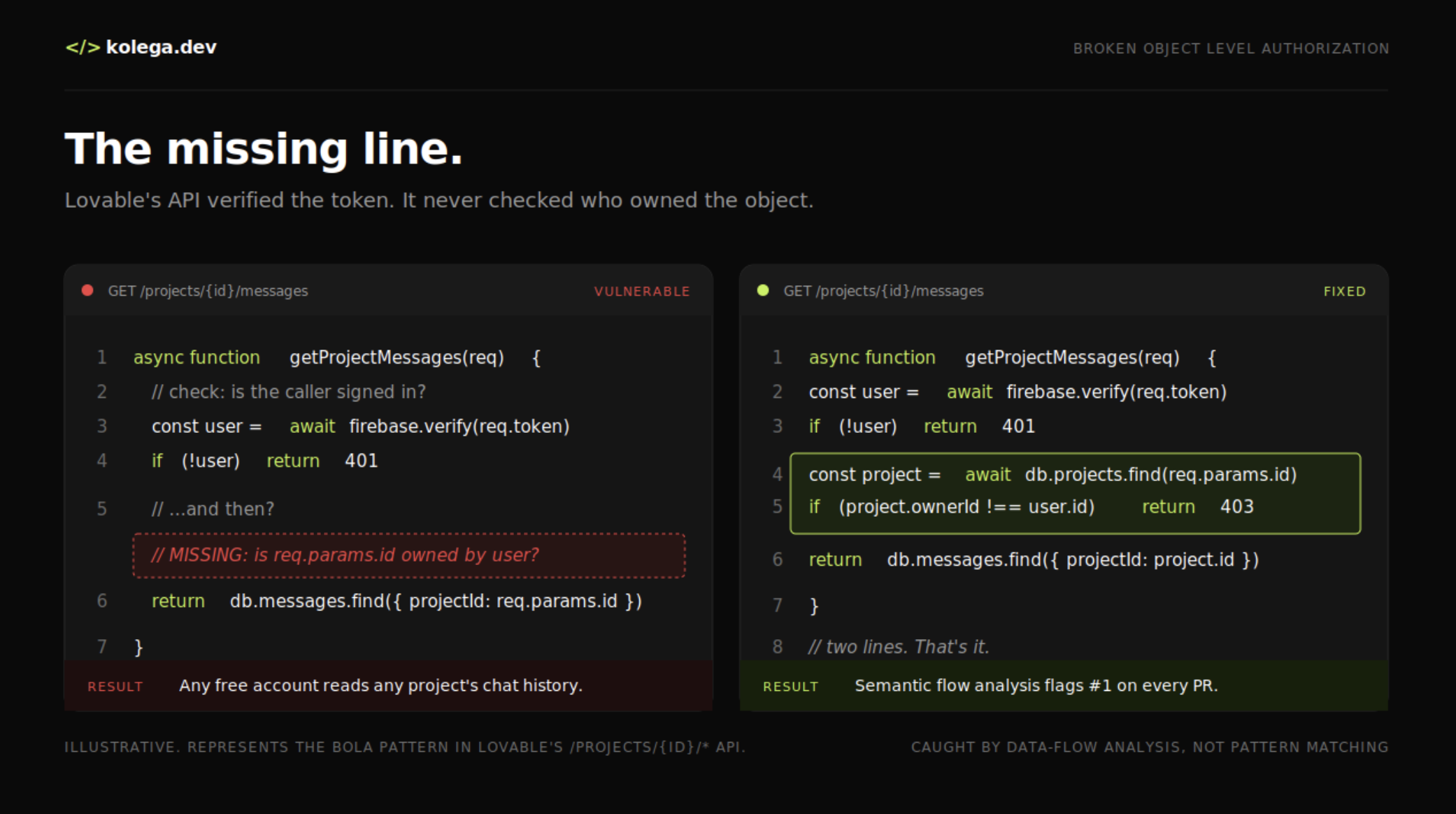

The flaw is a Broken Object Level Authorization bug. Lovable's /projects/{id}/* endpoints verified that you had a valid Firebase auth token. They just never checked whether that token belonged to the owner of the project you were asking about. BOLA is ranked number one on OWASP's API Security Top 10. It's the most common, most documented, most preventable API bug in existence.
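The gap between "has a valid token" and "owns this project" is easier to see in code. What follows is a minimal sketch of the bug class, not Lovable's actual implementation: the token table, the in-memory `PROJECTS` store, and both handler names are hypothetical stand-ins invented for illustration.

```python
from dataclasses import dataclass

# Hypothetical in-memory stand-ins for an auth provider and a project store.
TOKENS = {"token-alice": "alice", "token-mallory": "mallory"}


@dataclass
class Project:
    id: str
    owner_id: str
    source: str


PROJECTS = {"p1": Project(id="p1", owner_id="alice", source="<app source>")}


class Unauthorized(Exception):
    pass


class Forbidden(Exception):
    pass


def authenticate(token: str) -> str:
    """Return the user ID behind a valid token (authentication only)."""
    try:
        return TOKENS[token]
    except KeyError:
        raise Unauthorized("invalid token")


def get_project_vulnerable(token: str, project_id: str) -> Project:
    authenticate(token)          # proves the caller is *someone*
    return PROJECTS[project_id]  # never asks whether that someone owns it


def get_project_fixed(token: str, project_id: str) -> Project:
    user_id = authenticate(token)
    project = PROJECTS[project_id]
    if project.owner_id != user_id:  # the object-level authorization check
        raise Forbidden("caller does not own this project")
    return project
```

In this sketch, `get_project_vulnerable("token-mallory", "p1")` hands Alice's project to Mallory, while `get_project_fixed` raises `Forbidden` for the same call. Both handlers "check auth"; only one of them checks ownership.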

What that one missing check exposed:

- Every project created before November 2025
- Full source code of other users' apps
- Complete AI chat histories, including everything developers pasted in mid-session
- Database credentials hardcoded into that source code
- Real customer data from live apps built on Lovable

The researcher demonstrated it by pulling an active admin panel for Connected Women in AI, a Danish nonprofit. The source code contained hardcoded Supabase credentials. Those credentials returned live records: real names, job titles, LinkedIn profiles, and Stripe customer IDs belonging to people at Accenture Denmark and Copenhagen Business School. Employees of Nvidia, Microsoft, Uber, and Spotify had affected projects too.

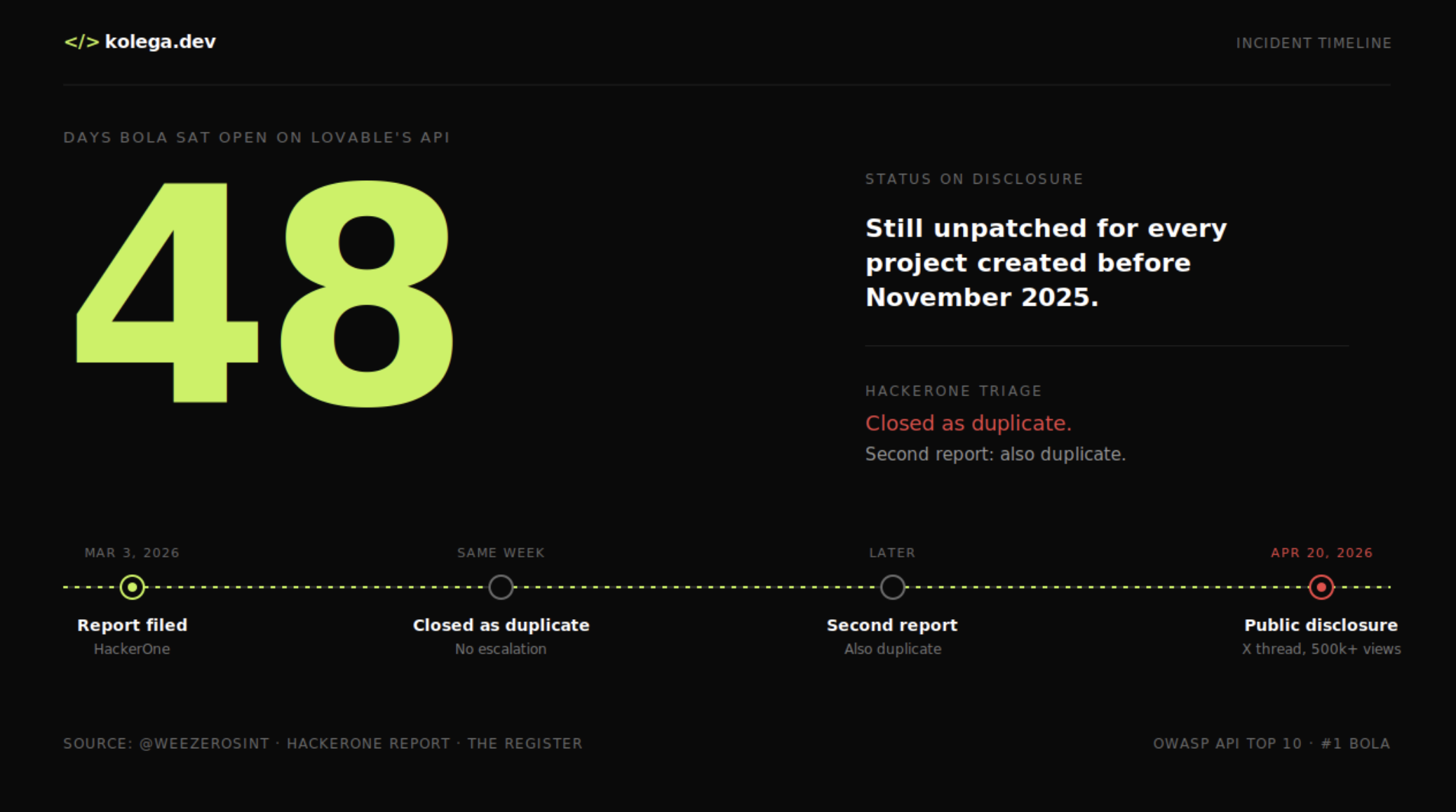

The report was filed on HackerOne on March 3, 2026. Forty-eight days before the public disclosure.

Lovable closed it as a duplicate.


The response, in three acts

Lovable posted their first statement on X a few hours after the thread blew up. It opens with: "To be clear: We did not suffer a data breach."

Act one was blaming the documentation. "Our documentation of what 'public' implies was unclear, and that's a failure on us." Chat visibility on public projects, they said, used to be the default, and that was confusing.

Act two is the part worth reading carefully: "When it comes to code of public projects: That is intentional behavior. We have experimented with different UX for how the build history is surfaced on public projects, but the core behavior has been consistent and by design."

Intentional. By design. That code, those prompts, those database credentials sitting in other users' source, being readable by any free account, yeah, we meant to do that.

Act three came later Monday night, when the first statement didn't take. Lovable posted a longer version that walked through the history of their public-vs-private tiers. Free tier users couldn't make private projects at all until May 2025. Enterprise got that privilege in May 2025. Everyone got private-by-default in December 2025. Then, in their own words: "In February, while unifying permissions in our backend, we accidentally re-enabled access to chats on public projects."

That re-enablement is what weezerOSINT reported on March 3. HackerOne triagers closed it because they thought reading other people's chats on public projects was the intended behavior, which, given that Lovable itself went on to tell the world that public code was by design, is not an unreasonable thing to conclude.

So the reports were closed without escalation. Lovable added ownership checks to the API, but only for new projects. Every project created before November 2025 stayed exposed. When weezerOSINT filed a second report documenting additional affected endpoints, Lovable marked it a duplicate too.

HackerOne, for its part, declined to comment.

This is the pattern, and it has a name now

The Register published an opinion piece the day before this broke, titled "I meant to do that! AI vendors shrug off responsibility for vulns." It was about Anthropic calling a Model Context Protocol design flaw that puts 200,000 servers at risk "an explicit part of how MCP stdio servers work." About Google, Microsoft, and Anthropic paying four-figure bug bounties for critical-severity hijacking flaws in their coding agents, then declining to publish CVEs. About the general AI-industry posture of "that's not a bug, it's working as intended" whenever the bug is in the AI product itself.

The Lovable statement that public source code was "intentional behavior" lands in exactly that same bucket. So does "we did not suffer a data breach" while screenshots of other users' chat histories circulate. So does adding ownership checks only for new projects and leaving everything created before November 2025 in the wind.

None of this is about a mistake. Mistakes happen. Permission regressions ship. Bug reports get triaged wrong. What this is about is the response pattern that AI companies have collectively decided works: deny, reframe, and blame something else. In this case, the "something else" was HackerOne.

What SOC 2 Type II actually certifies

Here's the awkward truth about compliance frameworks that almost nobody says out loud: SOC 2 Type II does not certify that your product is secure. It certifies that you documented a set of controls and followed them across an audit window. If your control says "we triage HackerOne reports within 48 hours," and the auditor sees that you did, that control passes, even if the report got closed as a duplicate because a triager misread what the researcher wrote.

The gap between "controls functioned as documented" and "our platform is actually safe to use" is a gap we've written about before. We called it Control Drift: the space between code-generation velocity and governance capacity. Lovable is a textbook demonstration of it.

Consider their stack:

- SOC 2 Type II, audited by Prescient Assurance, passed
- ISO 27001:2022 certified
- A HackerOne program with published triage
- A 24/7 incident response team, per their privacy policy
- Their own Security Checker, which runs on generated code before publish
- A dedicated CISO
- All of it, publicly documented at trust.lovable.dev

And on the other side: 48 days of open BOLA on their own platform API, the most common API bug in existence, reported, triaged, closed, and then re-reported. The control plane, not the output. Their own code.

The apps that Lovable generates are what we wrote about last year. CVE-2025-48757, 170 sites, 303 insecure Supabase endpoints, no row-level security, user data just sitting there. That was generated-code security. Bad defaults. You could reasonably blame the end users. Some people did.

This one is different. This one is the platform failing to protect users from each other. That's not something you can push downstream to the person who typed the prompt.

What would have caught this

BOLA is the textbook case for a tool that reads code flow instead of code shape. Pattern matchers look at endpoint handlers and see Firebase auth verification and move on. The handler "looks" authenticated. Semgrep flags the absence of a call to auth.verify(); it doesn't flag the presence of auth.verify() followed by a query that forgot to filter by the authenticated user's ID.

Semantic analysis does. It follows the user ID from the token through the handler to the query and asks: did this query filter by the authenticated user, or did it just filter by the object ID from the URL?

Illustrative. The two lines that separate a working API from a public dump of every project on the platform.

That check, run on every PR, would have flagged /projects/{id}/* as a BOLA exposure the first time it was written. Not 48 days after the fact. Not when a researcher with a free account starts posting screenshots.

We know this works because we ran the same playbook on 45 open-source projects and found 225 vulnerabilities traditional SAST missed. Maintainers accepted 90.24% of them. Nine of those findings were authorization bypass vulnerabilities in Phase (PRs #722-731, accepted and merged). Others sat inside LLM infrastructure stacks like Langfuse and vLLM, the same plumbing most AI-native companies are building on.

The fixes shipped the same week they were reported, with tests that proved they worked. Not because the tool is magic. Because authorization bugs are deterministic once you look at data flow instead of syntax.

Lovable has a CISO, a SOC 2 Type II, an ISO 27001 certificate, a Security Checker, a partnership with a pen-testing firm announced seven days before this incident, and a 48-day-old HackerOne report for the number-one entry in the OWASP API Security Top 10 sitting closed as a duplicate.

If the certificate doesn't keep that from happening, what does the certificate prove?

The part that should actually scare you

If you're a Lovable customer who had a project before November 2025, your source code, your AI chat history, and anything you pasted into a Lovable conversation should be treated as public until proven otherwise. Rotate every credential at its upstream provider, not just in the Lovable dashboard. Audit chat histories for secrets. Turn on RLS on every Supabase table. Assume exposure.
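For the "audit chat histories for secrets" step, a rough first pass can be scripted. The sketch below assumes you have the chat history or source available as plain text; the three patterns are illustrative assumptions (JWT-shaped strings, which is the shape Supabase API keys have commonly taken, Stripe-style `sk_live_` / `sk_test_` keys, and connection URLs with an inline password), not a complete rule set. A dedicated secret scanner will catch far more.

```python
import re

# Illustrative patterns only; real secret scanners ship much larger rule sets.
SECRET_PATTERNS = {
    # JWT-shaped strings: three base64url segments, the first starting with "eyJ".
    "jwt": re.compile(
        r"\beyJ[A-Za-z0-9_-]{8,}\.[A-Za-z0-9_-]{8,}\.[A-Za-z0-9_-]{8,}"
    ),
    # Stripe-style secret keys with the sk_live_ / sk_test_ prefixes.
    "stripe_secret_key": re.compile(r"\bsk_(?:live|test)_[A-Za-z0-9]{16,}"),
    # Connection URLs with an inline password, e.g. scheme://user:pass@host
    "db_url_with_password": re.compile(
        r"\b[a-z][a-z0-9+]*://[^\s:/@]+:[^\s@]+@[^\s/]+"
    ),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for every suspected secret."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits
```

Anything this flags should be rotated at the provider that issued it. A clean result proves nothing on its own, since the patterns are deliberately narrow.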

If you're not a Lovable customer, the lesson is smaller but stickier: a compliance badge on a trust page is not security research. Neither is a blog post titled "We don't just talk about security, we prove it." The thing that proves it is the platform surviving contact with a free-tier account making five API calls.

Lovable failed that test in March. They closed the ticket. They shipped the narrative.

The code wasn't secure. The platform wasn't secure. The SOC 2 Type II certificate didn't make it secure. The CISO didn't make it secure. The HackerOne program didn't make it secure. What would have made it secure is a tool that reads what the authorization code does, not what it looks like, on every PR, before the permissions unification in February ever shipped.

Semantic analysis isn't a marketing claim. It's the difference between a 48-day-old BOLA on your production API and a failing check on a pull request that never merged.

Lovable's platform is "SOC 2 Type II compliant." Every project created before November 2025 was also publicly readable by any free account. Both things were true at the same time.

That's Control Drift. And it's going to keep happening to AI-native companies until the industry stops treating compliance badges as a substitute for the kind of analysis that actually reads the code.

What would have prevented it is a tool that reads authorization logic on every PR and flags the handler that forgets to check ownership, before it ever reaches a HackerOne triager's queue.

There is no world in which a compliance framework catches that. There is also no world in which a pen-test catches it on day 365 of a 48-day exposure window. It gets caught on the PR or it gets caught by a researcher with a free account, and those are the only two options.

Lovable picked the second one.

kolega.dev — semantic analysis on every PR.